Product

IoT Data Hub

An open and expandable data platform for companies that want to make machine data quickly usable and later expand it in a controlled manner for analysis, applications and AI.

Typical triggers

The IoT Data Hub is useful when machine data needs to be quickly usable without having to set up several separate data islands for dashboards, analysis, applications and AI.

Typical triggers are distributed machine data, high integration effort between OT and IT or the desire to quickly create initial visibility with just a few data sources.

The platform forms a common foundation for machine data acquisition (MDE), dashboards, analysis functions, proprietary applications and integrated AI functions.

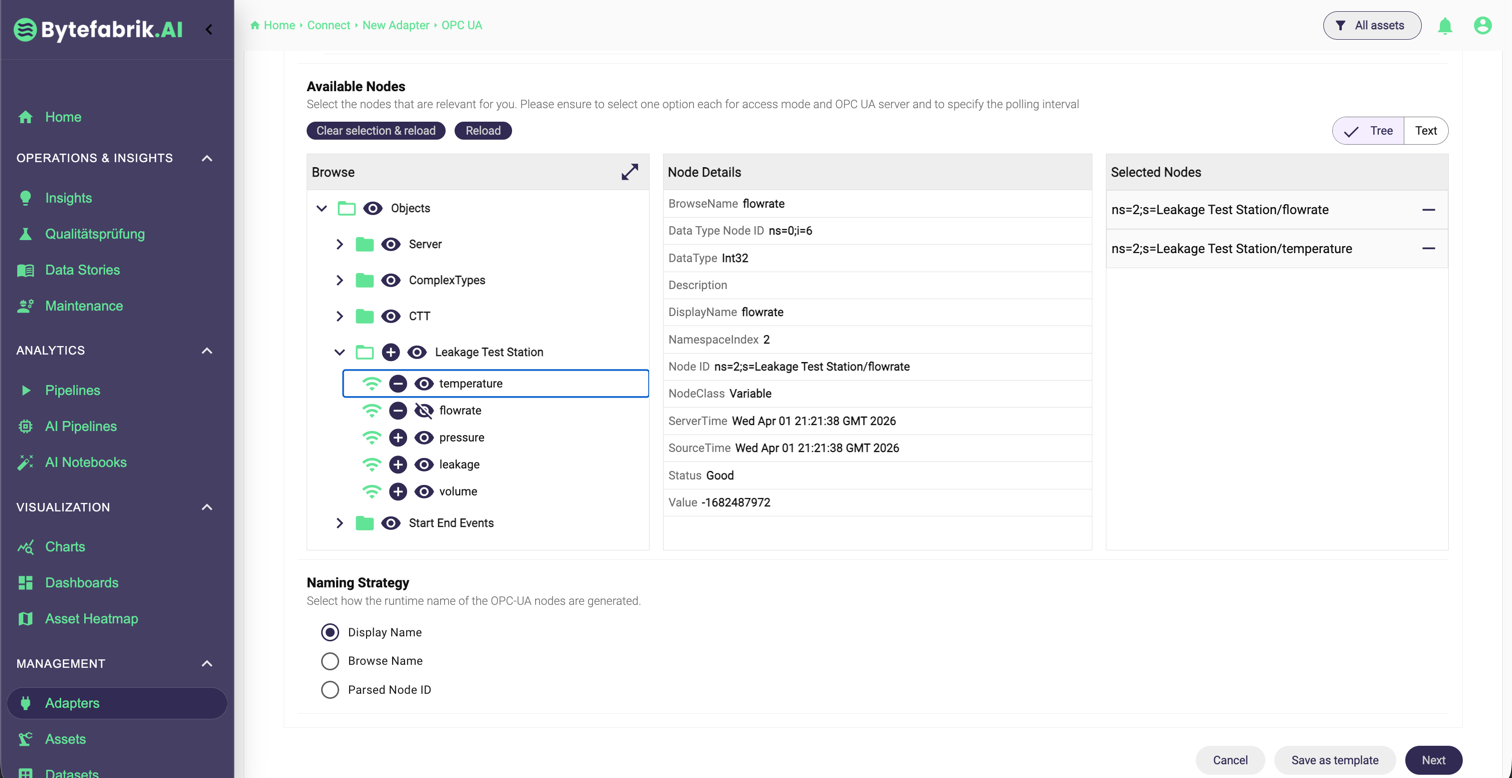

Connectivity

The platform connects controllers, sensors, gateways and shop floor systems via industrial protocols and reusable integration patterns.

Data management

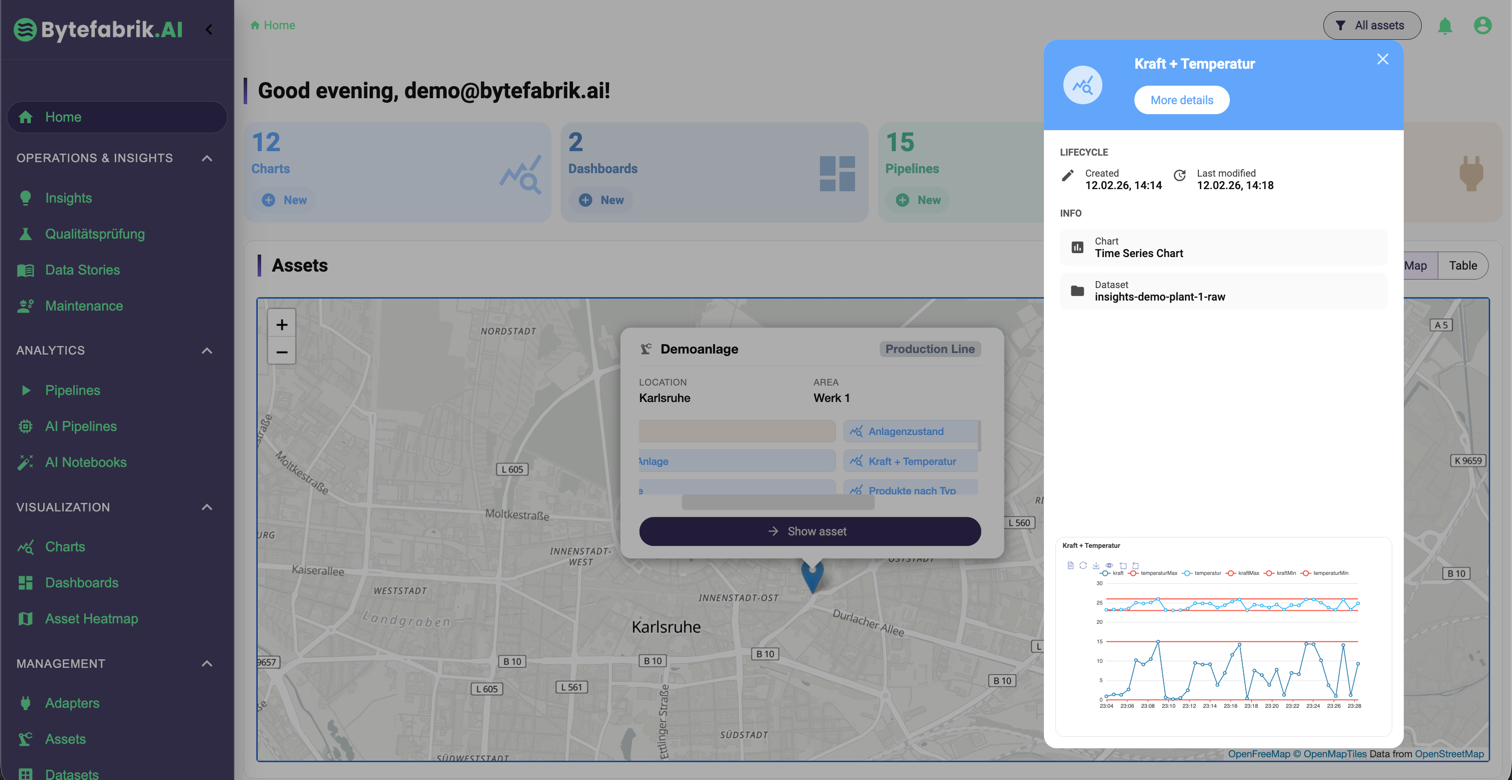

Raw data is transferred into a consistent data model and structured with asset management and semantic description for dashboards, analysis and AI.
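As an illustration of what transferring raw data into a consistent model can look like, the following minimal Python sketch maps raw source tags to described signals that belong to an asset. The schema, the tag names and the `normalize` function are hypothetical and chosen only for this example; they are not the IoT Data Hub's actual data model or API.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Signal:
    """Semantic description of one measured value (hypothetical schema)."""
    name: str
    unit: str
    asset_id: str


@dataclass(frozen=True)
class Reading:
    """One normalized measurement in the common model."""
    signal: Signal
    value: float
    timestamp: datetime


# Hypothetical mapping from raw source tags to described signals.
SIGNAL_MAP = {
    "PLC1.DB10.Temp": Signal("spindle_temperature", "degC", "line1/mill-03"),
    "PLC1.DB10.Rpm": Signal("spindle_speed", "1/min", "line1/mill-03"),
}


def normalize(raw: dict) -> Reading:
    """Convert one raw record into the common model; unknown tags raise KeyError."""
    signal = SIGNAL_MAP[raw["tag"]]
    ts = datetime.fromtimestamp(raw["ts_ms"] / 1000, tz=timezone.utc)
    return Reading(signal=signal, value=float(raw["val"]), timestamp=ts)


reading = normalize({"tag": "PLC1.DB10.Temp", "val": "71.5", "ts_ms": 1700000000000})
```

Once every source is normalized this way, dashboards, analyses and AI functions can refer to signals by name, unit and asset instead of by controller-specific addresses.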

On the same platform, live data streams, historical data and AI functions share one data basis, so no additional data storage is required.

Pipelines, rules and events process ongoing machine data directly and make current statuses usable for the shop floor, control center and technical monitoring.
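The interplay of rules and events on a data stream can be sketched as follows. This is a generic Python illustration under assumed names (`Rule`, `Event`, `evaluate`, the signal names and the 80.0 threshold are all hypothetical), not the platform's own rule engine.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator


@dataclass(frozen=True)
class Event:
    """Raised when a rule matches a reading."""
    rule: str
    signal: str
    value: float


@dataclass(frozen=True)
class Rule:
    """A named condition on one signal."""
    name: str
    signal: str
    condition: Callable[[float], bool]


def evaluate(readings: Iterable[tuple[str, float]], rules: list[Rule]) -> Iterator[Event]:
    """Yield an event for every reading that satisfies a rule on its signal."""
    for signal, value in readings:
        for rule in rules:
            if rule.signal == signal and rule.condition(value):
                yield Event(rule.name, signal, value)


# Hypothetical rule and sample stream of (signal name, value) pairs.
rules = [Rule("overtemperature", "spindle_temperature", lambda v: v > 80.0)]
stream = [
    ("spindle_temperature", 72.0),
    ("spindle_speed", 9000.0),
    ("spindle_temperature", 85.5),
]
events = list(evaluate(stream, rules))
```

Because `evaluate` is a generator, it processes readings one at a time as they arrive, which fits ongoing machine data better than batch evaluation.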

On the same data basis, historical data, trends and domain context can be combined with dashboards and AI notebooks for in-depth evaluations, while AI pipelines add analysis logic that runs on ongoing data streams and can be operated on the platform.

Application layer

Dashboards, analysis applications, workflows or your own extensions can be operated on the same data basis without having to introduce a second platform.

The platform combines data connection, data model, usage layer and operational aspects in a coherent stack.

The IoT Data Hub can be operated as an integrated solution for a quick start or as a distributed architecture where governance and security requirements call for it.

An edge component can be operated close to machines, controllers or cells, collect data locally and synchronize it with the central or cloud instance in a controlled manner.
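One common way to collect data locally and synchronize it in a controlled manner is a store-and-forward buffer. The Python sketch below illustrates that general pattern; the `EdgeBuffer` class, its parameters and the `send` callback are assumptions made for this example and do not describe the edge component's real interface.

```python
from collections import deque
from typing import Callable


class EdgeBuffer:
    """Store-and-forward buffer: keep records locally until the upload succeeds."""

    def __init__(
        self,
        send: Callable[[list[dict]], bool],
        max_records: int = 10_000,
        batch_size: int = 100,
    ):
        self._send = send  # hypothetical uploader; returns True on success
        self._queue = deque(maxlen=max_records)  # oldest records drop when full
        self._batch_size = batch_size

    def collect(self, record: dict) -> None:
        """Store one record locally."""
        self._queue.append(record)

    def sync(self) -> int:
        """Send pending records in batches; stop at the first failed batch."""
        sent = 0
        while self._queue:
            size = min(self._batch_size, len(self._queue))
            batch = [self._queue[i] for i in range(size)]
            if not self._send(batch):
                break
            # Remove records only after the central instance accepted them.
            for _ in batch:
                self._queue.popleft()
            sent += len(batch)
        return sent

    @property
    def pending(self) -> int:
        """Number of records not yet synchronized."""
        return len(self._queue)
```

Records stay in the buffer until a batch is confirmed, so a temporary connection loss delays synchronization but does not lose data, as long as the buffer capacity is not exceeded.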

For small and medium-sized companies, the IoT Data Hub can be used as a comparatively simple, integrated solution for machine data acquisition, dashboards and initial analyses.

For larger companies, the platform supports cross-site architectures with roles, responsibilities, distributed instances and controlled provision of data and applications.

The IoT Data Hub can be started with just a few data sources. Additional locations, applications or AI functions can be added later on the same basis.

At the beginning, the relevant machines, control systems or sensor sources are connected so that the first live data can be displayed without a long lead time.

Assets, signals and domain context are then structured, and initial dashboards, charts or analytical views are provided on the same data basis.

The first analyses, rules or application-specific modules can be used productively and evaluated in operation after just a short time.

If additional lines, locations, specialist applications or AI modules are added, the platform can be expanded in a controlled manner on the same basis.

AI notebooks and AI pipelines

AI notebooks and AI pipelines are part of the IoT Data Hub and use the same data, integration and operating basis as connectivity, dashboards and applications. This means there are no separate data islands between platform operation, analysis and AI.

The AI functions are based directly on the existing data model, the connectors and the operating mechanisms of the IoT Data Hub.

AI notebooks are part of the IoT Data Hub as an integrated working environment. They help to securely evaluate stored machine data using natural language and explainable Python code, and to prepare the results as visualizations, tables or further analyses.

AI pipelines are integrated into the platform and define analyses, monitoring and data harmonization on current machine data. The generated logic can be executed and operated on the same data and operating basis.
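To give a rough idea of monitoring logic that runs on current machine data, the following Python sketch flags values that deviate strongly from a rolling window. It is a simple statistical check chosen for illustration; the `RollingMonitor` class, the window size and the threshold are assumptions and do not represent the logic an AI pipeline in the IoT Data Hub actually generates.

```python
from collections import deque
from statistics import mean, pstdev


class RollingMonitor:
    """Flag values that deviate strongly from the recent window (illustrative only)."""

    def __init__(self, window: int = 20, threshold: float = 3.0):
        self._values = deque(maxlen=window)
        self._threshold = threshold

    def update(self, value: float) -> bool:
        """Return True if value lies more than threshold standard deviations
        from the mean of the last full window, then add it to the window."""
        is_anomaly = False
        if len(self._values) == self._values.maxlen:
            mu = mean(self._values)
            sigma = pstdev(self._values)
            is_anomaly = sigma > 0 and abs(value - mu) > self._threshold * sigma
        self._values.append(value)
        return is_anomaly


monitor = RollingMonitor(window=5, threshold=3.0)
samples = [10.0, 10.2, 9.8, 10.1, 9.9, 10.0, 15.0]
flags = [monitor.update(v) for v in samples]
```

The monitor reports nothing until the window is full, which avoids false alarms during start-up at the cost of a short warm-up phase.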

Demo

Arrange a demo to get to know the platform, analysis and AI functions based on your questions.